Tech Behind Spotify Wrapped Archive 2025

Here’s the third article for CTRL+BREAK:

https://engineering.atspotify.com/2026/3/inside-the-archive-2025-wrapped

And as always, huge thanks to the original authors — this one was a masterclass in large-scale AI systems, evaluation, data modeling, and launch engineering.

[Figure labels: remarkable days ordered by narrative potential + statistical strength (Top Artist Day, Top Genre Day, Top Podcast Day, Most Nostalgic, Most Unusual, New Year’s Day); expensive, high quality; human curated; fast + cheap (DPO); data-driven; brand-safe tone; logs + stats + country; prev reports (avoid repetition); per-user sequence, massive parallelism; column-based, parallel writes; LLM judge (accuracy, safety, tone, format); trace → fix → replay; global big-bang, no cold start.]

Short Summary


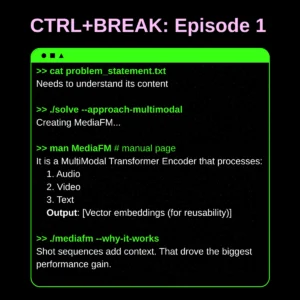

Spotify’s Wrapped Archive 2025 identifies up to five “remarkable days” from a listener’s entire year using a ranked set of heuristics such as Biggest Music Day, Discovery Day, Most Nostalgic Day, and days that deviate from the listener’s usual taste. After computing these days for ~350M users, Spotify generated 1.4 billion AI-written stories (about four per user on average), each created by a fine-tuned, optimized model distilled from a frontier LLM.
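To make the "ranked set of heuristics" idea concrete, here is a minimal Python sketch of how up to five days might be picked. It is an illustration, not Spotify's implementation: the priority order, the `strength` score, the one-day-per-heuristic rule, and the names `Candidate` and `pick_remarkable_days` are all assumptions made for this example.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical priority order (lower = preferred). The real ordering is not
# given in the summary; only the idea of a ranked heuristic list is.
HEURISTIC_PRIORITY = {
    "biggest_music_day": 0,
    "discovery_day": 1,
    "top_artist_day": 2,
    "top_genre_day": 3,
    "top_podcast_day": 4,
    "most_nostalgic_day": 5,
    "most_unusual_day": 6,
}


@dataclass(frozen=True)
class Candidate:
    day: date
    heuristic: str
    strength: float  # assumed statistical-strength score, higher is stronger


def pick_remarkable_days(candidates, limit=5):
    """Pick at most `limit` candidates, one per day and one per heuristic."""
    ranked = sorted(
        candidates,
        key=lambda c: (HEURISTIC_PRIORITY[c.heuristic], -c.strength),
    )
    chosen, seen_days, seen_heuristics = [], set(), set()
    for c in ranked:
        # Skip a day that already has a story, or a heuristic already used.
        if c.day in seen_days or c.heuristic in seen_heuristics:
            continue
        chosen.append(c)
        seen_days.add(c.day)
        seen_heuristics.add(c.heuristic)
        if len(chosen) == limit:
            break
    return chosen
```

A listener with few qualifying days simply gets fewer than five stories, which matches the "up to five" wording above.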

The pipeline includes heuristic ranking, a two-layer prompting system, a fully parallel report generator, a column-oriented storage model for safe concurrent writes, and automated LLM-based evaluation for quality control. Wrapped’s global “big bang” launch required aggressive pre-scaling and synthetic load testing across regions to ensure no cold starts.
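One plausible reading of "column-oriented storage for safe concurrent writes" is that each story lands in its own column of the user's row, so parallel workers never have to read and rewrite a shared record. The sketch below illustrates that idea with an in-memory stand-in. The `ColumnStore` class, the `day_<n>` column naming, and the `generate_story` stub are hypothetical and do not reflect Spotify's actual storage or model APIs.

```python
import threading
from concurrent.futures import ThreadPoolExecutor


class ColumnStore:
    """In-memory stand-in for a wide-column table: row key -> {column: value}."""

    def __init__(self):
        self._rows = {}
        # Protects the Python dict only; a real wide-column database would
        # handle per-cell writes on the server side.
        self._lock = threading.Lock()

    def write_cell(self, row_key, column, value):
        with self._lock:
            self._rows.setdefault(row_key, {})[column] = value

    def read_row(self, row_key):
        with self._lock:
            return dict(self._rows.get(row_key, {}))


def generate_story(user_id, day):
    # Placeholder for the model call (assumption: one story per user and day).
    return f"Story for {user_id} on {day}"


def generate_all(days_by_user, store, max_workers=8):
    """Generate every (user, day) story in parallel, one column per story."""
    tasks = [
        (user_id, index, day)
        for user_id, days in days_by_user.items()
        for index, day in enumerate(days)
    ]

    def run(task):
        user_id, index, day = task
        # Each task writes only its own cell, so writers never overwrite
        # each other's output.
        store.write_cell(user_id, f"day_{index}", generate_story(user_id, day))

    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        list(pool.map(run, tasks))  # list() re-raises any worker exception
```

The design point is that a retry or a late-finishing worker only ever touches one cell, so a failed story can be regenerated without locking or rewriting the rest of the user's row.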

Wrapped Archive demonstrates how AI storytelling, data engineering, infra scaling, and safety loops come together to ship a feature for hundreds of millions of users.